Instagram will now warn you before disabling your account

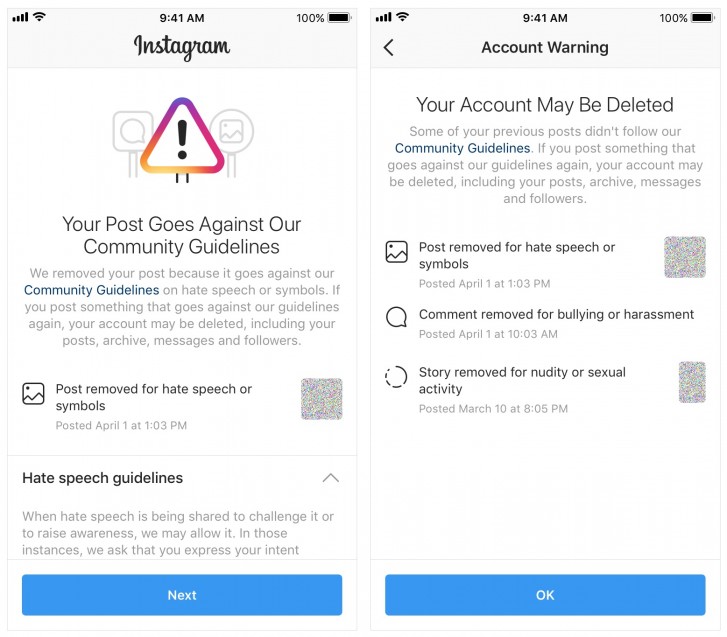

Facebook-owned Instagram has received a lot of flak in the past for disabling accounts without any prior notice. The company has now changed its policies and committed to warning users before disabling their accounts.

Instagram users will receive in-app notifications when their accounts are at risk of being disabled for violating the community guidelines on nudity, pornography, bullying, harassment, hate speech, drug sales, and terrorism.

Users will also get a chance to appeal deleted content and disabled accounts from within the app instead of having to go through the Help Center.

Instagram also said that, in addition to removing accounts with a certain percentage of violating content, it will "remove accounts with a certain number of violations within a window of time," similar to how its parent company Facebook enforces its policies.

Last week, Instagram rolled out AI-powered anti-bullying features, and with today's update, the company aims to keep its platform "a safe and supportive place."

