Intel just acquired fellow chip maker Altera - plans to leverage its know-how for smarter chips

Intel just completed the biggest acquisition in its corporate history – a $16.7 billion deal to buy US-based Altera. The company is largely unknown in the end-user realm, and understandably so, as it specializes in programmable logic devices (PLDs), mostly what are known as field-programmable gate arrays (FPGAs).

We won't be going into too much technical detail, but a simple introduction to the technology in question and why it is important is necessary. Most of the processors we have grown accustomed to in our lives are versatile, general-purpose units that can perform many tasks. This goes for Intel's chips as well as the silicon found inside our smartphones. However, in some cases, you don't really need an all-encompassing solution, but rather a more efficient custom one designed for a single purpose. This is where application-specific integrated circuits (ASICs) come in. Right in the middle ground are FPGAs, whose logic can be partly reconfigured on the client side, after manufacturing, depending on current needs.

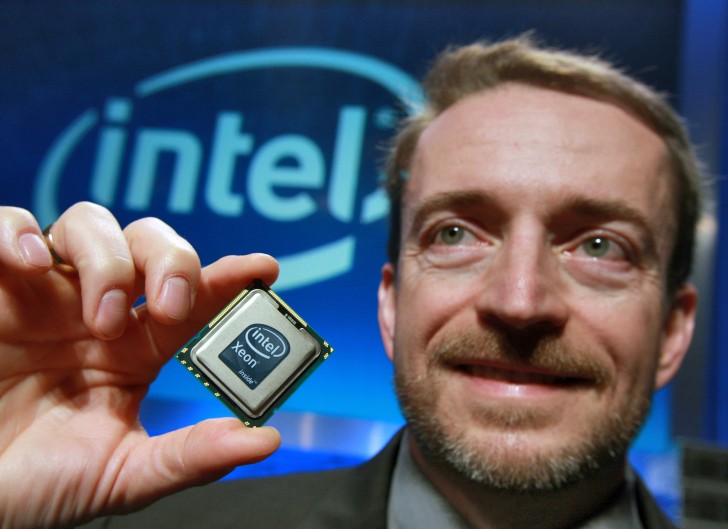

Intel hopes that it can leverage this technology to better meet the needs of its corporate clients like Facebook, Google and Microsoft, specifically to speed up complex tasks like facial and other pattern recognition. These companies already use Intel's Xeon server processors, so it makes a lot of sense to strive for complete end-to-end solutions. In fact, the company's long-term vision is to combine both technologies in a single-chip solution, but as CEO Brian Krzanich explains, that is still a little way off. In the meantime, starting in 2016, Intel will be offering Altera chips alongside its own lineup.

Reader comments

- AnonD-3678

I still remember using Altera 13 years ago to design a USB hub controller. Their simulator was very accurate. On a Pentium 3 with 128 MB RAM, the VHDL code took 5 minutes to compile and 10 minutes to simulate then transfer the code to the altera bo...

- boiWAMBO

Altera is a pretty popular chip supplier for test equipment (more specifically test boards or load boards) of semicon companies.

- AnonD-107998

Altera FPGA kits are pretty popular in IT schools, mainly because they serve as basic (to advanced) tools for understanding how computers work. Not just theoretically, but also practically.
